Research & Articles

Sharing what the data shows us.

The DBIR Confirms the New Bottleneck in Security: Not Pointing, but Prioritizing

The 2026 DBIR is out, and vulnerabilities are the name of the game. Empirical is proud to have contributed critical research to this year’s report.

EPSS V5 Is Here

It’s an exciting day at Empirical: we’re releasing V5 of EPSS, the scoring system our team helped create, which Anthropic recently recommended to help teams prepare for an AI-accelerated tsunami of vulnerabilities.

Anthropic Is Right About EPSS. That Still Leaves the Hard Part.

Anthropic’s security team recently gave defenders sensible advice for an AI-accelerated world: patch KEV first, then use EPSS to prioritize the rest. The team wrote: “EPSS provides a daily-updated probability that a given Common Vulnerability and Exposure (CVE) will be exploited in the next 30 days. Patching the KEV list first and then everything above a chosen EPSS threshold will help you turn thousands of open CVEs into a manageable queue.”
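The "KEV first, then everything above an EPSS threshold" rule can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Finding` record, the `build_queue` helper, the placeholder identifiers, and the 0.10 threshold are all hypothetical, and sorting each tier by EPSS is one reasonable ordering rather than anything the quoted advice prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical record for one open CVE on an asset."""
    cve_id: str
    epss: float    # probability of exploitation in the next 30 days (0.0 to 1.0)
    in_kev: bool   # True if the CVE appears on the KEV list


def build_queue(findings, epss_threshold=0.10):
    """Return KEV findings first, then non-KEV findings at or above the threshold.

    The threshold default is an arbitrary assumption; each tier is sorted by
    EPSS, highest first. Findings below the threshold and not in KEV are left out.
    """
    kev = sorted(
        (f for f in findings if f.in_kev),
        key=lambda f: f.epss,
        reverse=True,
    )
    above_threshold = sorted(
        (f for f in findings if not f.in_kev and f.epss >= epss_threshold),
        key=lambda f: f.epss,
        reverse=True,
    )
    return kev + above_threshold


# Placeholder identifiers, not real CVEs.
open_findings = [
    Finding("CVE-A", 0.02, True),
    Finding("CVE-B", 0.90, False),
    Finding("CVE-C", 0.05, False),
    Finding("CVE-D", 0.40, True),
]
for finding in build_queue(open_findings):
    print(finding.cve_id, finding.epss, finding.in_kev)
```

Note that a KEV entry with a low EPSS score still lands ahead of a high-EPSS finding that is not in KEV, which is what "patch KEV first" implies.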

The Knowing Machine

Dan Geer posed the right question at Black Hat in 2014: are vulnerabilities sparse or dense? If sparse, you can patch your way to safety. If dense, patching without prioritization is the myth of Sisyphus with a boulder that keeps growing. Eleven years later, writing with Dave Aitel in Lawfare, he conceded the terms and moved to the next problem. What the industry needs, he argued, is a "knowing machine" that converts hazards into risks, not a "pointing machine" that merely enumerates flaws and screams equally at all of them.

The 500 Organization Reality Check

This is part five of our series on empirical exposure management. Data from 500+ organizations in P2P Vol. 8 showed that 95% of enterprise assets already carried a top-tier exploitable vulnerability when the CVE firehose was 18,000 a year; at 48,000+ in 2025 and climbing, that exposure has only compounded while remediation capacity has not.

Using ServiceNow? We’ve got you covered.

Empirical Security’s new ServiceNow Vulnerability Response app brings predictive exploit intelligence directly into ServiceNow workflows so security teams can move from reactive vulnerability management to forward-looking remediation.

When Headcount Doesn’t Help

The practical implication is a reframe of how programs are governed. Most teams measure remediation volume: tickets closed, patch compliance rates, mean time to remediate. These metrics describe throughput. They do not measure the marginal risk reduction of each remediation slot. Under constrained optimization, what matters is not how many findings you close but whether each slot goes to the vulnerability whose fix most reduces the probability of exploitation at the margin.
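To make the slot-allocation idea concrete, here is a minimal sketch, assuming every remediation slot costs the same and that fixing a finding drops its exploitation probability to a known residual value. The `Candidate` type, its fields, and `allocate_slots` are hypothetical names introduced for illustration, not part of any product or published method.

```python
import heapq
from typing import NamedTuple


class Candidate(NamedTuple):
    """Hypothetical remediation candidate."""
    finding_id: str
    exploit_probability: float          # probability of exploitation before the fix
    residual_probability: float = 0.0   # assumed probability remaining after the fix


def marginal_reduction(candidate):
    """Risk removed by spending one slot on this candidate."""
    return candidate.exploit_probability - candidate.residual_probability


def allocate_slots(candidates, slots):
    """Pick the `slots` candidates with the largest marginal reduction.

    With equal-cost slots, taking the top-k by marginal reduction maximizes
    the total reduction; unequal costs would need a different formulation.
    """
    return heapq.nlargest(slots, candidates, key=marginal_reduction)


# Placeholder data: three findings, capacity for two fixes.
backlog = [
    Candidate("finding-1", 0.05),
    Candidate("finding-2", 0.70, residual_probability=0.10),
    Candidate("finding-3", 0.30),
]
for chosen in allocate_slots(backlog, slots=2):
    print(chosen.finding_id, marginal_reduction(chosen))
```

Under this framing, a team that closes many low-probability findings scores well on throughput but poorly on total risk removed, which is the gap the paragraph above describes.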

Say Goodbye to Kenna — Say Hello to Local Models at Scale

Last week, Cisco announced the End of Life of Cisco VM (Kenna Vulnerability Management), a company and product I spent the better part of 13 years building. Needless to say, this brought me through the whole range of emotions, but it also served as a great way to reflect on that time.

New Features: Critical Indicators & Known Exploitation Calendar Heatmap

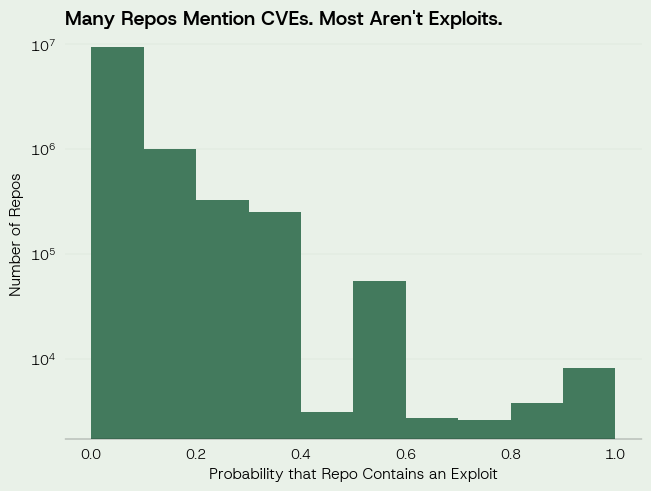

We built critical indicators to explain the reasoning behind any CVE’s Empirical Score (0% - 100% real-world exploitation risk). Every CVE we analyze is modeled against over 2,000 data points. We took these model weight contributions and grouped them into the following categories: Chatter, Exploitation, Threat Intelligence, Vulnerability Attributes, Exploit Code, References, and Vendor.

Risk Model Slop

In cybersecurity risk scoring, “risk model slop” is the quiet but widening gap between what a probability means in a model and how vendors distort it once it leaves its original calibration.

Local Models vs Global Scores: Why Context Isn’t Enough

Context improves interpretation; local models improve decisions. If you want autonomous, defensible security actions, invest in a model that learns your environment and keeps learning as it changes.